
Tomorrow is the 19th, which means it's time for Expo! We will be presenting Fesentience at the Oakdale Theatre in Wallingford from 8:00 to 3:00. We've been working as hard as we can for the past week to prepare, and we're all excited to finally present to the judges! Everyone has put in a strong effort so far, and we will do our best to represent our project well.


PBS came to our school today to interview our Expo group for a series they are working on called "Spark", which showcases brilliant projects created by the youth of Connecticut. They are recording our project in action to better understand how it works, and interviewing our leader for an inside look at how we developed our product. We are very excited and honored to have them here today and are looking forward to any future collaborations.

Today, our programming team installed the library for the Neon LED system we mentioned in our previous post. The library allows us to fully control the lighting and color of the LED strip. In the meantime, the rest of our group was at work writing the abstract, a paper summarizing the development process and functions of our project. Expo is next Saturday (11 days away), so we are working to make sure our project is as up to date as possible and that development continues.

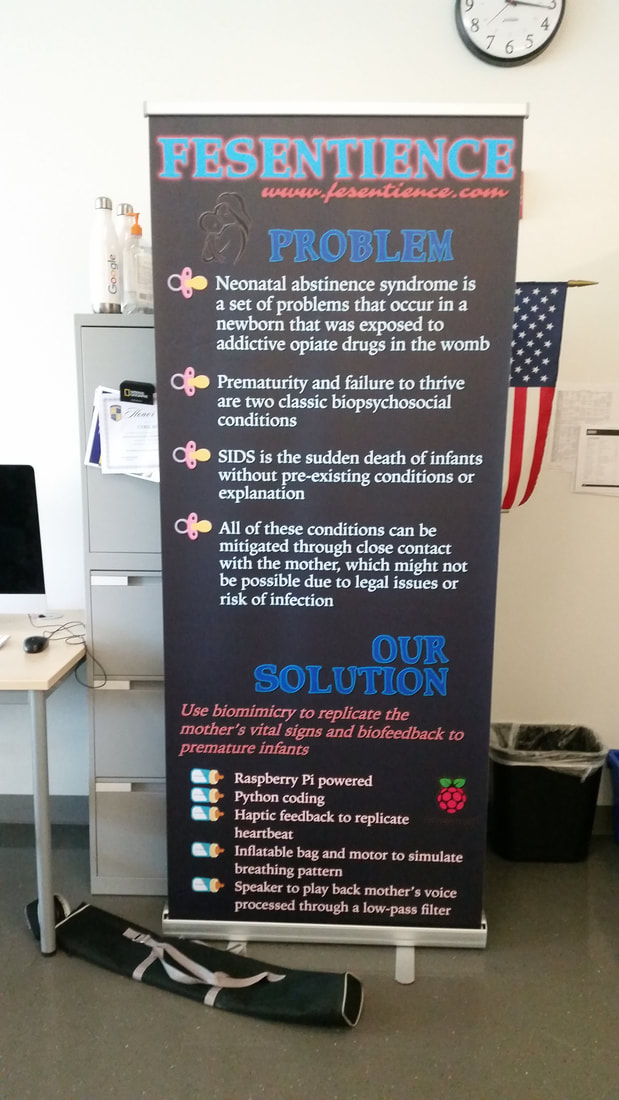

In addition to this, we have just received our new banner, which will officially represent our project while we are presenting at the big Expo. The finishing touches are being put on the website, and everyone is doing their best to perfect their elevator speeches for the judges. The power supply for the Neon LED Flex Rope and the resistors for the other LEDs have arrived, so we are now able to set up the LED system of our device.

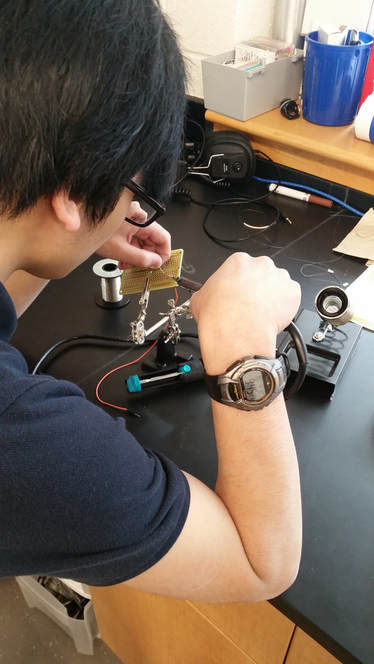

The Neon LED Flex Rope has been powered, and it works. However, by default it changes to random colors and blinks randomly. We will be using the AllPixel library to control when the LED rope is active and inactive from the Raspberry Pi. We will also control the color of the LED rope and experiment with different RGB values to find the perfect shade of blue for infant development. The LEDs themselves have been tested by connecting them to the Raspberry Pi's 5V and GND pins in series with a 330 ohm resistor, and they are functioning correctly. We have also made sure that we are able to turn them on and off via the GPIO pins of the Raspberry Pi using Python commands. Further soldering will likely be done at some point in order to permanently connect the LEDs and resistors to the Raspberry Pi. On a side note, power supplies for the Raspberry Pis have also arrived, so we will be able to power the Raspberry Pis directly from an outlet, which allows for more remote usage.

Hizami has made significant progress on development of the hardware and software. The haptics system has been attached to the Raspberry Pi, and basic functionality of the haptics has been coded. Activating the "hapticsOn" function causes the motors to vibrate for a set duration, turn off for a set duration, and repeat in a continuous cycle. Activating the "hapticsOff" function turns off the motors. Once the resistors and LEDs have been attached to the breadboard, we will also be able to include visual indicators for whether the haptics system is on (using a green light) or off (using a red light).

Code for the vacuum pump is also in the late stages, but will require further physical prototype development to ensure it acts as intended. While the connections from the power relays (used to control whether power goes to the vacuum pump or directly to ground) are complete, further modifications will be necessary to ensure these connections are secure. For now, we will be using one vacuum pump to make sure the system works before trying to add the second one. The vacuum pumps will also have indicator LEDs that show when they are activated and deactivated.

Today we worked on soldering wires together in order to connect the motion motors to the PCB, so that we can connect it to the Raspberry Pi and code the haptic abilities of our artificial womb. Finishing this component is vital to the completion of our project. This will allow us to create the voice portion of our artificial womb, so that the baby will be able to relax when they are restless.
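The on/off haptics cycle described above can be sketched in Python roughly as follows. This is a minimal illustration and not our actual code: the class name `HapticsController`, the `write_pin` callback, and the half-second timings are all assumptions made for the example. The methods `haptics_on` and `haptics_off` mirror the "hapticsOn" and "hapticsOff" functions. On the Raspberry Pi, `write_pin` could wrap a call such as `GPIO.output(pin, state)` from the RPi.GPIO library; here it is left as a plain function so the sketch can run on any computer.

```python
import threading
import time


class HapticsController:
    """Hypothetical sketch of a repeating vibrate/pause cycle.

    write_pin is any function that accepts True (motor on) or False
    (motor off). On a Raspberry Pi it could wrap GPIO.output(pin, state);
    that wiring is an assumption and is not shown here.
    """

    def __init__(self, write_pin, on_seconds=0.5, off_seconds=0.5):
        self._write_pin = write_pin
        self._on_seconds = on_seconds      # assumed vibration time
        self._off_seconds = off_seconds    # assumed pause time
        self._stop = threading.Event()
        self._thread = None

    def haptics_on(self):
        """Start the continuous on/off cycle in a background thread."""
        if self._thread is not None and self._thread.is_alive():
            return  # already running
        self._stop.clear()
        self._thread = threading.Thread(target=self._cycle, daemon=True)
        self._thread.start()

    def haptics_off(self):
        """Stop the cycle and make sure the motor is left off."""
        self._stop.set()
        if self._thread is not None:
            self._thread.join()
            self._thread = None
        self._write_pin(False)

    def _cycle(self):
        while not self._stop.is_set():
            self._write_pin(True)
            # wait() returns True as soon as haptics_off() is called
            if self._stop.wait(self._on_seconds):
                break
            self._write_pin(False)
            self._stop.wait(self._off_seconds)
```

Running the cycle in a background thread means the main program stays free to handle other parts of the device, such as the pumps or LEDs, while the motors keep pulsing. Calling `haptics_off()` interrupts the wait immediately instead of finishing the current pause.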

We plan on using the Raspberry Pi to run a Python program that will play a sound, which is what we are currently testing. Once we are able to play sounds through the Raspberry Pi, we will be able to pass the audio through a low-pass filter, which will make it sound realistic. Tomorrow, we will be working on soldering all of the wiring for the components onto the PCBs so that we can connect them to the Raspberry Pi.

Also, we have realized that a single vacuum pump takes too long to inflate the balloon, and that air would leak out of the deflation pump. While the latter issue could be solved by using a mechanical valve, that does not solve the former problem. So instead of using one pump to inflate the lung and one pump to deflate it, we will try using two pumps to inflate the lung and allow it to deflate naturally. For now, here is a short video demonstration in which I use Python commands to make our vibration motor repeatedly turn on and off ("blinking").

One of our group members, Thomas, met with Professor Van Tassel from Yale, who had previously responded to our email and offered us assistance with Fesentience. He emphasized the importance of including the mother's voice in the device. He also recommended that we meet with pediatricians and neonatology experts to further our understanding.
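To give an idea of what the low-pass filtering step mentioned above could look like, here is a minimal Python sketch of a simple one-pole low-pass filter applied to a list of audio samples. It is only an illustration under stated assumptions: the function name `low_pass`, the 500 Hz cutoff, and the 8000 Hz sample rate are hypothetical values chosen for the example, not settings from our device, and a real version would still need to read and play the audio.

```python
import math


def low_pass(samples, cutoff_hz, sample_rate_hz):
    """Smooth a list of audio samples with a one-pole low-pass filter.

    Frequencies well above cutoff_hz are reduced, which gives a
    muffled sound. All parameter values are up to the caller.
    """
    dt = 1.0 / sample_rate_hz
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = dt / (rc + dt)  # smoothing factor between 0 and 1

    filtered = []
    previous = 0.0
    for sample in samples:
        # Move part of the way from the last output toward the new sample
        previous = previous + alpha * (sample - previous)
        filtered.append(previous)
    return filtered


# Example with assumed values: a harsh 4000 Hz tone (alternating +1 / -1
# at an 8000 Hz sample rate) comes out much quieter after filtering.
harsh_tone = [1.0 if i % 2 == 0 else -1.0 for i in range(200)]
muffled = low_pass(harsh_tone, cutoff_hz=500, sample_rate_hz=8000)
```

A lower cutoff removes more of the high frequencies, so the cutoff value is something we would expect to tune by ear.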

In addition to this, our parts have arrived, and our lead engineer Hizami and the rest of the development team are working on assembling the prototype and programming the Raspberry Pis. We have tested the vacuum pumps we will be using for our synthetic lung by making them inflate and deflate a balloon. We have also tested the speaker using a small program we wrote in Python on the Raspberry Pi.

Progress update! In order to further develop our project, we have assigned specific tasks to students in our class. In addition to this, we plan on collaborating with various professionals and academic institutions. We hope to gain insight that will advance our research and multimedia in order to bolster the effectiveness of our project.

After contacting a number of professors from Yale, we have received an email back from one of them. We plan to arrange a meeting with the professor, during which we will record an interview. Hopefully, through this process we will gain useful information that will allow us to further develop the scientific and outreach portions of our project.

Finally, today is the day we pitch to parents, students, and of course, judges! We intend to represent our project and capabilities to the best of our ability, and we fully intend to move on to the next stages of this statewide competition and present our idea before our rivals at the Innovation Expo! There's much more tweaking that needs to be done to our project, but right now it's time to win mini Expo!


Expo 2018

Blog posts by Niko True, Myles Vincent, and Hizami Anuar